Introduction

- A single, shared naming convention for all Elasticsearch-based projects

- The entire Elastic suite is adopting this nomenclature (Beats, Logstash, APM, …)

- The proposed field naming is both simple and clear.

- It makes it easier to design common Kibana dashboards acting on different sources of data

- It simplifies the user experience when navigating from one domain to another: cybersecurity, network, application, cloud resources, etc.

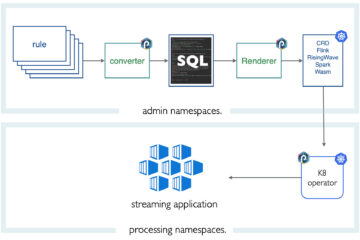

ECS and the Punch

Punch ECS Integration

In the diagram, the ECS punchlet is depicted in red. It cannot be simpler.

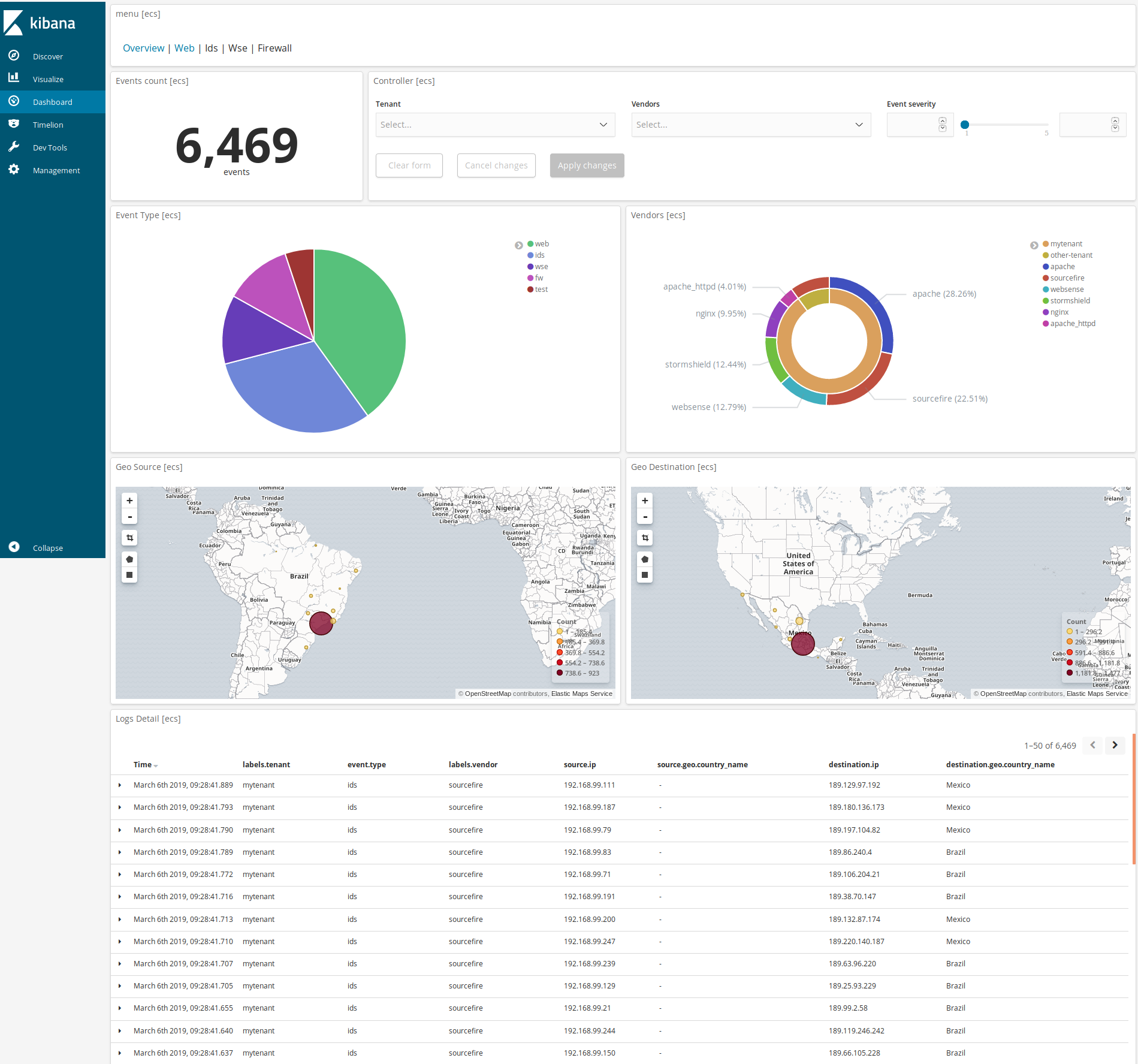

ECS in Action

Let us see ECS in action. Once ECS data has been ingested into Elasticsearch, here is a standard and simple dashboard (one available on the Punch marketplace). It illustrates how log data coming from different sources can all be visualised on a common dashboard. Note that this is ready to be demonstrated on a Punch standalone distribution.
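Because every source is normalized to the same ECS field names, one aggregation can serve all of them. As a minimal sketch, the following builds the body of an Elasticsearch terms aggregation on a shared ECS field. The helper name `top_values_request` and the aggregation name `top_values` are hypothetical, the field is assumed to be mapped as a keyword, and nothing is sent to a cluster here.

```python
import json


def top_values_request(ecs_field: str, size: int = 10) -> dict:
    """Build a search body that counts documents per value of one ECS field.

    Hypothetical helper for illustration; it only builds the request body.
    """
    return {
        "size": 0,  # return no hits, only the aggregation buckets
        "aggs": {
            "top_values": {  # arbitrary aggregation name
                "terms": {"field": ecs_field, "size": size}
            }
        },
    }


# The same body works for any log source that fills this ECS field.
body = top_values_request("source.geo.country_iso_code", size=5)
print(json.dumps(body, indent=2))
```

The point of the sketch is that the query never mentions the log source: Apache, firewall, or application logs all land in the same buckets once they share the field name.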

Feb 21 10:48:35 host2 189.134.68.95 - alice [31/Dec/2012:03:00:00 +0100] "GET /software/winvn/index.php?q=3#article HTTP/1.0" 404 8368 "http://www.example.com/start.html" "Mozilla/5.0 (Linux; Android 5.1.1; Nexus 5 Build/LMY48B; wv) AppleWebKit/537.36 (KHTML, like Gecko) Version/4.0 Chrome/43.0.2357.65 Mobile Safari/537.36"

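Here are the fields extracted from the Apache log line above, shown with both their ECS name and their original Punch name: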

| Converted to ECS | Original Punch fields | Value |
| --- | --- | --- |
| ecs.version | – | v1.0.0-beta2 |
| labels.channel | channel | apache |
| labels.tenant | tenant | mytenant |
| labels.vendor | vendor | apache_httpd |
| event.type | type | web |
| event.action | action | Not Found |
| event.created | obs.ts | 2012-12-31T03:00:00.000+01:00 |
| event.original | message | Feb 21 10:48:35 host2 189.134.68.95 - alice [31/Dec/2012:03:00:00… |
| event.alarm.id | alarm.id | 160018 |
| event.severity | alarm.sev | 2 |
| source.address | – | 189.134.68.95 |
| source.ip | init.host.ip | 189.134.68.95 |
| source.geo.city_name | init.usr.loc.cty_short | Mexico City |
| source.geo.country_iso_code | init.usr.loc.country_short | MX |
| source.geo.country_name | init.usr.loc.country | Mexico |
| source.geo.location.lat | init.usr.loc.geo_point[1] | 19.4342 |
| source.geo.location.lon | init.usr.loc.geo_point[0] | -99.1386 |
| source.user.name | init.usr.name | alice |
| observer.hostname | obs.host.name | host2 |
| http.request.method | web.request.method | GET |
| http.request.referrer | web.header.referer | http://www.example.com/start.html |
| http.response.body.bytes | session.out.byte | 8368 |
| http.response.status_code | web.request.rc | 404 |
| http.version | web.header.version | 1.0 |
| url.path | target.uri.urn | /software/winvn/index.php?q=3#article |
| url.query | – | q=3 |
| url.fragment | – | article |
| user_agent.original | web.header.user_agent | Mozilla/5.0 (Linux; Android 5.1.1; Nexus 5 Build/LMY48B; wv … |
| – | parser.name | apache_httpd |
| – | parser.version | 1.2.0 |
| – | col.host.name | punch-elitebook |
| – | lmc.parse.host.ip | 127.0.0.1 |
| – | lmc.parse.host.name | punch-elitebook |
| – | lmc.parse.ts | 2019-03-06T14:30:05.552+01:00 |
| – | obs.ts | 2012-12-31T03:00:00.000+01:00 |
| – | rep.host.name | host2 |
| – | rep.ts | 2019-02-21T10:48:35.000+01:00 |
| – | size | 325 |
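The mapping in the table above is mostly a renaming step. The sketch below illustrates it in Python; it is not the actual ECS punchlet. It assumes documents are flat dictionaries keyed by dotted field names, and the function name `to_ecs` is hypothetical. How `source.address`, `url.query` and `url.fragment` are really produced is not stated in the table, so deriving them from `source.ip` and from the URI is an assumption.

```python
from urllib.parse import urlsplit

ECS_VERSION = "v1.0.0-beta2"  # value shown in the table

# Punch field -> ECS field, copied from the rows of the table.
PUNCH_TO_ECS = {
    "channel": "labels.channel",
    "tenant": "labels.tenant",
    "vendor": "labels.vendor",
    "type": "event.type",
    "action": "event.action",
    "obs.ts": "event.created",
    "message": "event.original",
    "alarm.id": "event.alarm.id",
    "alarm.sev": "event.severity",
    "init.host.ip": "source.ip",
    "init.usr.loc.cty_short": "source.geo.city_name",
    "init.usr.loc.country_short": "source.geo.country_iso_code",
    "init.usr.loc.country": "source.geo.country_name",
    "init.usr.name": "source.user.name",
    "obs.host.name": "observer.hostname",
    "web.request.method": "http.request.method",
    "web.header.referer": "http.request.referrer",
    "session.out.byte": "http.response.body.bytes",
    "web.request.rc": "http.response.status_code",
    "web.header.version": "http.version",
    "target.uri.urn": "url.path",
    "web.header.user_agent": "user_agent.original",
}


def to_ecs(punch_doc: dict) -> dict:
    """Rename flat Punch fields to their ECS names (hypothetical helper)."""
    ecs = {"ecs.version": ECS_VERSION}
    for punch_field, ecs_field in PUNCH_TO_ECS.items():
        if punch_field in punch_doc:
            ecs[ecs_field] = punch_doc[punch_field]

    # The table reads geo_point[0] as longitude and geo_point[1] as latitude.
    point = punch_doc.get("init.usr.loc.geo_point")
    if point is not None:
        ecs["source.geo.location.lon"] = point[0]
        ecs["source.geo.location.lat"] = point[1]

    # Assumption: source.address mirrors source.ip, as in the table's values.
    if "source.ip" in ecs:
        ecs["source.address"] = ecs["source.ip"]

    # Assumption: query and fragment are split out of the original URI.
    uri = punch_doc.get("target.uri.urn")
    if uri is not None:
        parts = urlsplit(uri)
        if parts.query:
            ecs["url.query"] = parts.query
        if parts.fragment:
            ecs["url.fragment"] = parts.fragment
    return ecs


sample = {
    "init.host.ip": "189.134.68.95",
    "init.usr.loc.geo_point": [-99.1386, 19.4342],
    "target.uri.urn": "/software/winvn/index.php?q=3#article",
    "web.request.rc": 404,
    "parser.name": "apache_httpd",  # no ECS counterpart in the table
}
ecs_doc = to_ecs(sample)
```

Fields without an ECS counterpart in the table, such as `parser.name`, are simply left out of the result in this sketch.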

What Next?

The Punch parsers are provided as modules and can be deployed in various pipelines. By adopting the ECS format, the Punch encourages its customers to pay much closer attention to their own data normalization. In turn, they immediately benefit from the Elastic ecosystem. This is in fact already the case: we now deploy solutions based on Beats rather than any other metric or log agents for various Thales applications.

Because their data is normalized, these customers can also run machine learning processing on top of it using the Punch PML feature.

Stay tuned for more news on this soon.

Guillaume Fayemi, Dimitri Tombroff

Thanks for reading our blog.

Questions? contact@punchplatform.com