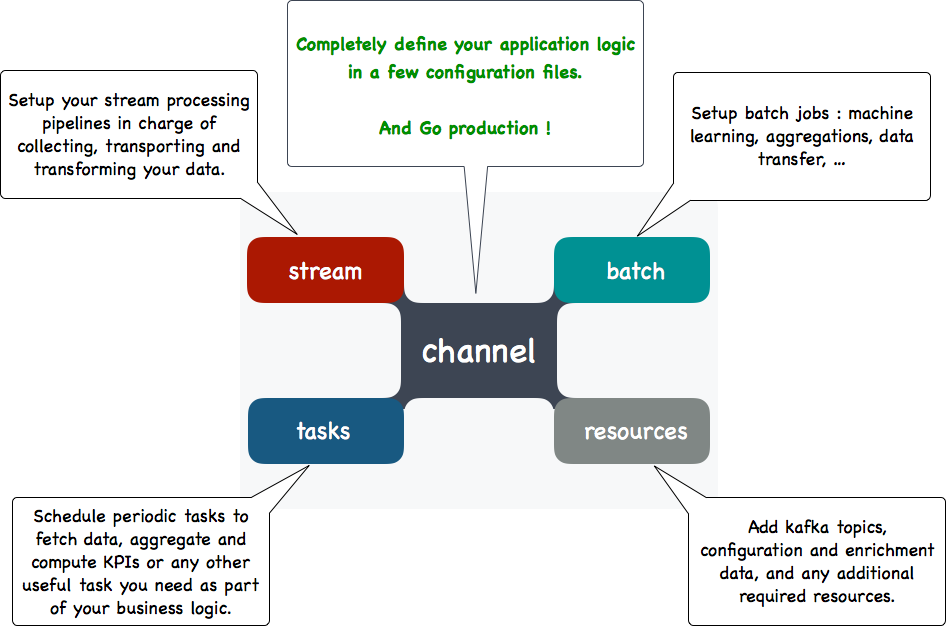

Context

While working on a surveillance solution, an interesting problem arose: we had to build an analysis component that was light, robust and highly adaptable. This component consists of a set of sequential or parallel tasks, each performing a single processing step. Because the solution is built on top of the Punchplatform, we first considered using one of its embedded technologies (e.g. Storm), but after careful consideration we chose Kafka Streams.

Sample topology for a signal analysis solution

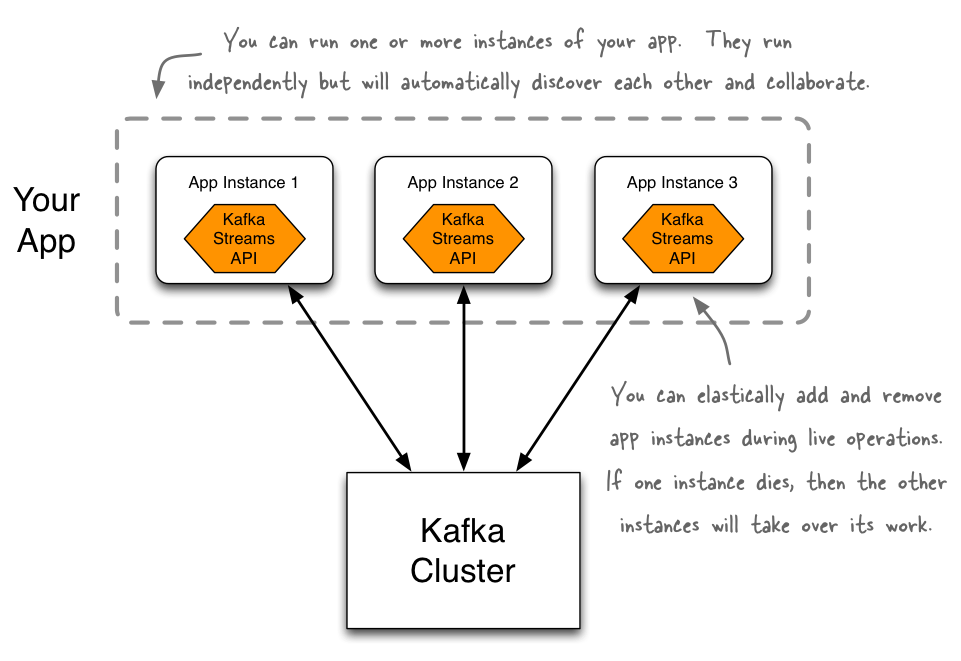

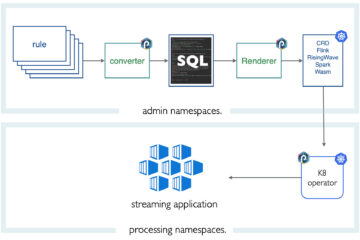

Kafka Streams is a client library for building applications where the input and output data are stored in a Kafka cluster. It combines the simplicity of writing and deploying standard Java and Scala applications on the client side with the benefits of Kafka’s server-side cluster technology.

As our surveillance solution was built upon the Punchplatform, we decided to have our analysis component configured, launched and monitored by the Punchplatform as well. We implemented a sample Kafka Streams application to prototype this.

Implementation

To validate the concept, the sample application had to do three things:

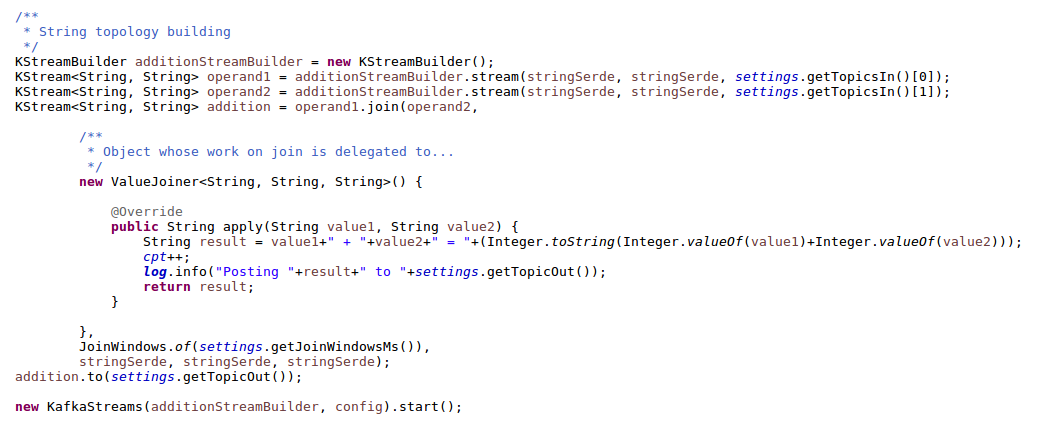

- Perform a sample processing step that includes a join.

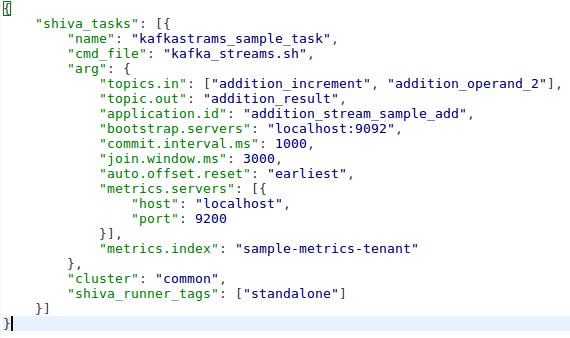

- Be configured and launched by the Punchplatform.

- Be monitored by the Punchplatform.
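To make the first requirement more concrete, here is a minimal, dependency-free sketch of what a windowed inner join between two keyed streams computes. It is not the code of the sample application and does not use the Kafka Streams API: the class `WindowedJoinSketch`, the `Event` type, the `join` helper and the sensor data are all hypothetical names chosen for illustration. In Kafka Streams itself, this kind of operation is typically expressed with `KStream#join` together with a `JoinWindows` definition.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical illustration only: plain Java, no Kafka dependency.
public class WindowedJoinSketch {

    /** A keyed, timestamped record, standing in for a message read from a Kafka topic. */
    static final class Event {
        final String key;
        final String value;
        final long timestampMs;

        Event(String key, String value, long timestampMs) {
            this.key = key;
            this.value = value;
            this.timestampMs = timestampMs;
        }
    }

    /**
     * Inner join: pairs every left event with every right event that has the
     * same key and a timestamp at most windowMs away from it.
     * Results follow the order of the left list, then the order of the right list.
     */
    static List<String> join(List<Event> left, List<Event> right, long windowMs) {
        List<String> joined = new ArrayList<>();
        for (Event l : left) {
            for (Event r : right) {
                boolean sameKey = l.key.equals(r.key);
                boolean inWindow = Math.abs(l.timestampMs - r.timestampMs) <= windowMs;
                if (sameKey && inWindow) {
                    joined.add(l.key + ":" + l.value + "+" + r.value);
                }
            }
        }
        return joined;
    }

    public static void main(String[] args) {
        List<Event> detections = List.of(
                new Event("sensor-1", "detected", 1_000L),
                new Event("sensor-2", "detected", 2_000L));
        List<Event> positions = List.of(
                new Event("sensor-1", "north", 1_500L),   // same key, 500 ms apart: joined
                new Event("sensor-2", "south", 9_000L));  // same key, 7 s apart: dropped
        // prints [sensor-1:detected+north]
        System.out.println(join(detections, positions, 1_000L));
    }
}
```

A real streaming join works incrementally on unbounded input and keeps its window state in a state store, which this batch-style loop deliberately leaves out. Only the matching rule (same key, timestamps within the window) is shown.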

All we had to do to fulfill this contract was to create a Maven application and:

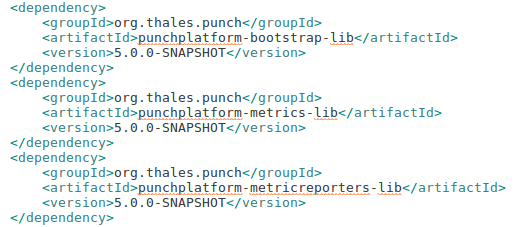

- Add the Punchplatform dependencies, so that the application can be called by the Punchplatform and send metrics to it.

- Implement a sample operation to launch and monitor.

- Create a configuration file and a launch script.

That’s it.

Result

The resulting application is an executable jar launched by a script integrated into the Punchplatform. It is monitored: its statistics are sent directly to Elasticsearch. It is robust and distributed, as the Punchplatform is now responsible for the distribution and the execution of the application. Finally, it is adaptable, as the Punchplatform deployment solution allows us to rebuild our topology and add or remove specific tasks by changing just a configuration file.

The complete sample can be found here.

Conclusion

This proof of concept illustrates one of the key benefits of the Punchplatform: it can integrate virtually any technology and provide, with very little work, all the deployment and monitoring features that are required in a production environment. This greatly eases the work of developers, allowing them to focus on the business-oriented parts of their application.
